NetEvents hosted a virtual webinar titled ‘AI with everything: the future of artificial intelligence in networking’ on Nov 17 in partnership with IDC.

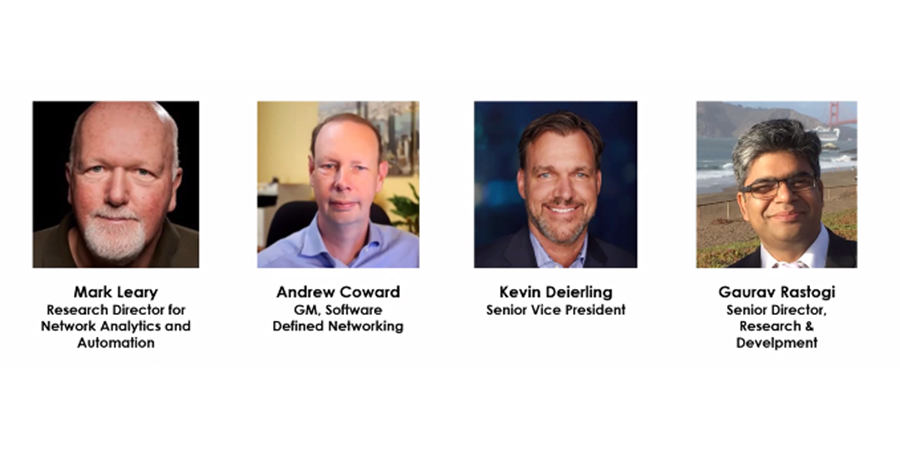

The panelists were Andrew Coward, GM, software-defined networking, IBM; Kevin Deierling, senior vice president, NVIDIA; and Gaurav Rastogi, senior director, R&D, VMware.

The panel was chaired by Mark Leary, research director for Network Analytics and Automation, IDC.

Mark Leary opened the discussion by highlighting the pressures networking staff face due to the complexity of today’s networks. “Most networking staff these days are faced with a lot of new kinds of responsibilities… no longer are they just simply deploying routers and switches and changing configurations and making minor tuning adjustments to the network. They're worried about the digital experience of the user, getting more involved in business outcomes, and doing more evangelism for the network.” He said that it was not about making the network simpler but “making smarter systems that are driven by AI and machine learning.” He added that with Cloud services, networking staff can focus more on delivering real, high value as opposed to losing time identifying problems and chasing down threats.

Mark pointed out that the top five use cases for AI were in IT automation, supply logistics, smart networking, intelligent process automation, and automated threat intelligence and prevention. He added that an extensive IDC executive survey worldwide with over 2000 respondents revealed that the top three are focused on infrastructure. “They're not focused on smart speakers or robotics, they're trying to figure out how to get better at delivering an infrastructure that's truly resilient in the digital age.”

Mark shared an IDC prediction that read, “By 2024, 60% of enterprises will have AI-infused their IT infrastructure operations through AIOps capabilities to perform an increasingly wide range of IT operational processes, expanding from AI-assisted individual management tasks such as event correlation or anomaly detection to automating entire operational processes such as closed-loop incident detection, classification, resolution, and remediation.” He felt that 60% was a bit low given the benefits that AI can bring to enterprise network infrastructure. “I think enterprises, small, medium or large, should infuse AI within their IT infrastructure,” he urged.

Mark said that network complexity, controlling Cloud, staff constraints, threat containment, and network automation were all growing challenges. He also pointed out the top five barriers to digital resiliency: latency, performance, and security constraints (42.7%); costly public cloud services (42.5%); expanding staff levels and skills (41.2%); lack of consistent automation and analytics (41.1%); and multiple data centers and Cloud management silos (39.9%). He added that with network automation, governance is as important as development.

With the stage set on that premise, Mark sought the panelists’ views on the first discussion topic, AI demand: when talking to customers, what is the focus of the discussion around AI in networking, and what benefits are they seeking with AI?

Responding to Mark’s inquiry, Andrew Coward said that over the last five or six years, IBM customers had spent a lot of time gathering data from different systems (Wi-Fi, wide area network, Cloud, etc.), yet they could not solve problems quickly because they did not know why or how they were happening. “The more information they've collected, the less clarity they seem to get over exactly what's going on.”

“A lot of the discussions are focused on how do I use AI to separate the noise and focus on what's the problem? The output for machine learning is not more data but is a program or a rule set that can be applied to be actionable. They (AI) don't just instantly know everything. They have to be trained, and they have to build up a base of knowledge and humans are intrinsically part of that before they can be trusted to go and make the changes that they are suggesting.”

Adding to the conversation, Gaurav Rastogi said, “You have to make real-time connections between application and network. Policies need to follow the application and follow the behaviours when there are attacks as about 85% of the breaches are directed to a group of applications. And about 50% of them are about stealing credentials. The attack happens when the breach happens. It is not a single event. This is a sequence of things that goes on and the network is the place where you can see the baselines and the anomalies.”

Sharing his views, Kevin Deierling said, “For someone to engage with activity and AI device and get human response means that the network and all of the connectivity needs to be operating flawlessly. Doing natural language processing, speech to text, visual analytics in real-time to automate and provide all of the networking capabilities automatically in super-low latency is what businesses need to focus on.”

Gaurav Rastogi said, “When the decisions are built on AI, there will be false positives, and there needs to be a way to measure the efficacy of what it is doing and be able to roll back or test the waters. So there is a traffic engineering and test-and-move forward approach that has to be built-in AI. Intuition is very important for trust to grow.”

On what benefits customers seek with AI and networking, Kevin Deierling said, “AI is going to be much bigger than people realize. AI is the most powerful technology force of our time. And companies that realize that and embrace AI and infuse it into their businesses are going to succeed.”

Gaurav Rastogi said AI is going to be a core piece of figuring out what the baseline is, identifying what the optimizations in the system are, and telling customers what they don't know about the network.

Putting his views across, Andrew Coward said, “When an app stops working, the first question is simply did the computer break? Or did the network break? Just getting to that understanding quickly is a critical starting point.”

The second discussion topic focused on the process of AI development.

Sharing his views, Kevin Deierling said, “We're building a foundation that uses AI, more accelerated computing for any application. You need a secure framework to build any AI application that supports all of the AI workloads. We work with partners companies like VMware, IBM, and others to deliver all of those platforms. We're building the framework similarly to cybersecurity. It's just bad corporate hygiene where people inadvertently are running a DevOps environment and they move their dev environment into a production environment and leave their credentials out in the clear, we can detect that quickly with AI without having to have a preset predefined set of rules.”

Andrew Coward said, “Three things for us are critical in how we're advancing AI. The first is the fidelity of the information that comes out of the network. A lot of information is everywhere across the network, just putting that into service assurance has been a critical element. Secondly, making sure we're reaching into the Cloud. The network intelligence has to reach into all those parts. And thirdly, applying AI to the point where we're trusted to automatically correct through closed-loop automation.”

Gaurav Rastogi said three major trends were driving their priorities. “Web application attacks are on the rise, 50% of breaches are on web applications. The attack vectors (60%-70%) are on the credentials and about 20-30% on the misconfigurations. These are our key focus areas driving our priorities on the AI side to build out solutions which will protect the web applications, protect the network and figure out malware.” He said VMware was also focusing on building APIs to exchange data with solutions that could provide an overarching view of what is going on across Clouds and data centers. “So there's a lot of prioritization of making these systems work with each other. And that's how we are prepping up for deeper AI than what we already do today.”

The third discussion topic addressed the AI delivery process.

Sharing his thoughts, Gaurav Rastogi said, “AI is getting infused in a big way. Every time a service request happens, it goes through an AI. However, a lot of times they don't know exactly what they should be doing. So, internal training is very intrinsic to getting them on board to understand and troubleshoot AI solutions. And lastly, I would say that from a customer standpoint, we always need to provide enough intuition along the way as the decisions are being made.”

Kevin Deierling said, “The other key thing is the complexity and you really can't detect it manually. I think all the telemetry, visualization and root cause analysis for any anomalies or any performance are critical to be able to visualize what's happening and make it simple to consume.”

Giving his final thoughts, Andrew Coward said, “We have to have something to work on - what data do you have today? Where is it coming from? Do you have the fidelity of that data from all the different sources that are needed? Understanding the language and being able to use that and import that as a data point becomes interesting. So the fidelity of the source of information is the critical piece. The other part is what are you going to do? How do you act on a change that you need to make in a network? Do you have the right provisioning tools to go push that change? So, once you've got that baseline, then you can start applying AI knowing that you've got enough data to process and the ability to execute on without spending hours doing it.”